Contents

- 1 Introduction

- 1.1 Disclaimer

- 2 Updates

- 2.1.1 OBS V23 - 19th February 2019

- 3 Contents

- 4 VMAF

- 4.1 History

- 4.2 How it works

- 4.3 Examples

- 4.4 What to expect

- 5 x264

- 5.1 History

- 5.2 Results

- 5.3 Conclusions for x264

- 6 x265

- 6.1 History

- 6.2 Results

- 6.3 Conclusions for x265

- 7 QuickSync

- 7.1 History

- 7.2 Results

- 7.3 Conclusions for QuickSync

- 8 NVENC Part 1

- 8.1 History

- 8.2 Results

- 8.3 Special note for NVENC Part 1

- 8.4 Conclusions for NVENC Part 1

- 8.4.1 Kepler

- 8.4.2 Decisions decisions...

- 8.4.3 Final thoughts on Maxwell and Pascal H.264 AVC

- 9 NVENC Part 2

- 9.1 History

- 9.2 Results

- 9.3 Conclusions for NVENC Part 2

- 9.3.1 H.264 AVC and live streaming

- 9.3.2 H.265 HEVC and offline encoding

- 10 VP9

- 10.1 History

- 10.2 Results

- 10.3 Conclusions for VP9

- 11 AV1

- 11.1 History

- 11.2 Results

- 11.2.1 Additional

- 11.3 Conclusions for AV1

- 12 Final thoughts
VMAF

History

Netflix has a financial interest in knowing how much quality they can deliver given certain constraints. Users may have equipment that can efficiently play some codecs but not others. They may also have limited bandwidth. Netflix needs to know how they can deliver the best viewing experience based on the limitations of each individual customer. As a result, Netflix stores multiple versions of each video, so they can send the best version that a customer can play.

Different videos encode differently, and sometimes codecs at one bitrate do well but at another bitrate perform poorly. In order to know which version of the video to send to the customer, Netflix developed VMAF.

How it works

At its core, VMAF provides a machine learning framework to tune a filter that assesses quality compared to an original. Basically, it detects differences and predicts how people will rate them. You can access the source code for VMAF and train it specifically on videos of your choosing. I’ve used the release included in FFmpeg 4, although you still have to build FFmpeg yourself with libvmaf support enabled (the --enable-libvmaf configure flag).
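As a rough illustration of how a measurement like this can be scripted, here is a minimal Python sketch. It assumes an `ffmpeg` binary on the PATH that was built with libvmaf, and that both files have the same resolution and frame rate. The helper names (`build_vmaf_command`, `parse_vmaf_score`, `measure_vmaf`) are hypothetical, and the "VMAF score:" log line the parser looks for is an assumption about FFmpeg's output that may differ between versions. This is not necessarily the exact command used for the results in this article.

```python
import re
import subprocess


def build_vmaf_command(distorted, reference, ffmpeg="ffmpeg"):
    """Return an FFmpeg argument list that scores `distorted` against `reference`."""
    return [
        ffmpeg,
        "-i", distorted,     # first input: the encoded video being judged
        "-i", reference,     # second input: the original it is compared to
        "-lavfi", "libvmaf",  # run the VMAF filter on the two inputs
        "-f", "null", "-",    # discard the video output; only the score matters
    ]


# Assumed log format, e.g. "[libvmaf @ 0x55d0] VMAF score: 94.123456".
_SCORE_RE = re.compile(r"VMAF score:\s*([0-9]+(?:\.[0-9]+)?)")


def parse_vmaf_score(ffmpeg_log):
    """Extract the VMAF score from FFmpeg's log text, or None if it is absent."""
    match = _SCORE_RE.search(ffmpeg_log)
    return float(match.group(1)) if match else None


def measure_vmaf(distorted, reference):
    """Run FFmpeg and return the reported VMAF score (requires Python 3.7+)."""
    result = subprocess.run(
        build_vmaf_command(distorted, reference),
        capture_output=True,
        text=True,
    )
    # FFmpeg writes its log, including the filter summary, to stderr.
    return parse_vmaf_score(result.stderr)
```

Calling `measure_vmaf("encoded.mp4", "original.mp4")` (placeholder file names) would return the score as a float, or `None` if no score line was found in the log.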

It’s not unusual for VMAF to give a score of 99 on an exact copy of a video. Technically, it should score 100. But because the system is a machine learning model, what it predicts is people’s opinions. When a group of people is shown two identical videos, some of them will think they are NOT identical. Therefore, VMAF is correctly predicting that some people will rate the videos as different, even though those people are incorrect.

Examples

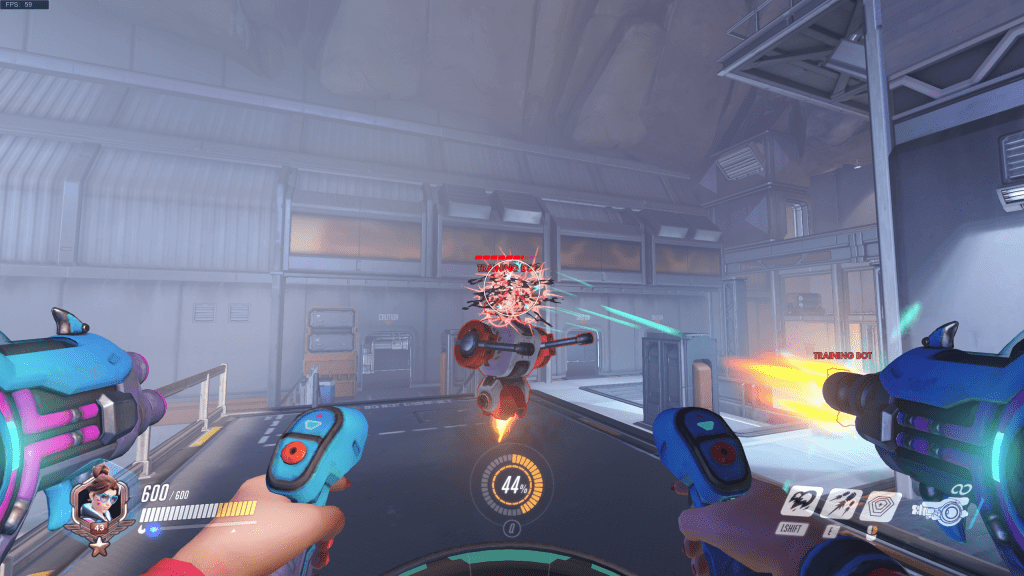

Here are some sample frames from the encoding results. I selected a frame that was not stationary because all codecs look better when there is no movement. There were a few frames that were more distorted, but they were rare and easy to miss. Using the most distorted frame would give an exaggerated impression, so I chose something in the middle. You may notice that two of them don’t have the firing animation mid-air. This is a consequence of different encoding methods. In the actual videos, they all appear to be firing; the animation is just split over different frames.

What to expect

I’m specifically referring to 2560×1440 60 fps Overwatch footage here. However, most of this applies in general, to some degree, even to 1080p real-life footage.

- 95+ – Generally speaking, on a 1440p 60 fps video, a VMAF score of 95 or higher looks perfect. It is very hard for a viewer to know that the video they are seeing isn’t the original. In my personal opinion, a VMAF of 95 looks as good as if I were actually playing Overwatch.

- 90-95 – This also looks fantastic. If a viewer can see the original and a 92 VMAF version side by side, they may see a difference. But a viewer who only sees the 92 VMAF version will likely still think that the video is perfect.

- 85-90 – The videos start to look flawed. Pixelation and colour blocking are evident, especially if the viewer is looking for them. You can usually see a difference of even 1 point here, so an 85 looks clearly worse than an 86.

- 80-85 – This is the kind of quality you typically expect from an average streamer. High-quality streams may look better, but at this level blockiness appears everywhere.

- 70-80 – Text gets really hard to read.

- 60-70 – Videos start to look really blocky, and it becomes difficult to see what is happening. Below 60 is terrible and therefore excluded from this article.
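The bands above can be sketched as a small lookup, which is handy when labelling a batch of scores. This is only an illustration: the name `describe_vmaf` and the shortened labels are mine, and because the ranges in the list share their endpoints, I have assumed that each lower bound is inclusive (so exactly 90 counts as 90-95).

```python
# (lower bound, label) pairs, highest band first.
# Assumption: lower bounds are inclusive, so a score of exactly 90 is "90-95".
VMAF_BANDS = [
    (95, "95+: looks perfect, very hard to tell from the original"),
    (90, "90-95: fantastic, differences show only side by side"),
    (85, "85-90: starting to look flawed"),
    (80, "80-85: average streamer quality, blockiness everywhere"),
    (70, "70-80: text gets really hard to read"),
    (60, "60-70: really blocky and difficult to see"),
]


def describe_vmaf(score):
    """Map a VMAF score (0-100) to the rough quality band described above."""
    for lower_bound, label in VMAF_BANDS:
        if score >= lower_bound:
            return label
    return "below 60: terrible, not covered in this article"
```

For example, `describe_vmaf(92)` returns the 90-95 label, and anything under 60 falls through to the final catch-all.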

Agamemnus has a passion for gaming and an eye for tech. You can see him streaming occasionally on twitch.tv/unrealaussies and catch him on the Unreal Aussies Discord. Evidence > Opinion.